SLOs for Orff Inspired Teaching

Student Learning Objectives: A Manageable “How To” Guide from the Trenches

The process of crafting an SLO, when kept logical and reasonable, can really be a positive force in your instruction and beneficial for your students. Don’t let the structure, all of the “official-ness” of the forms, and the evaluation aspects overwhelm you. You are doing a good job, and this is an opportunity to prove it in terms educators outside of our discipline can understand!

How to mesh an active music-making environment with an SLO and LDR assessment requirement:

SLO stands for Student Learning Objective. Don’t become consumed by the vocabulary from the world of lawyers and law makers. We can simplify the jargon to teacher-friendly language.

Step 1: Choose the group of students you are going to assess.

SLO protocol often asks teachers to choose one section or class. For most music teachers this is pretty simple—it’s usually the best-behaved, “favorite” class or the class we see most often.

Step 2: Set goals for your students’ growth or mastery. You may already have successful assessments in mind from past teaching. Use them and don’t reinvent the wheel! If you have not used formal assessment regularly, go slowly, and be patient and purposeful. You’ll find good options as you explore possibilities.

You will need to make some decisions about what your students should be doing to fulfil the requirements of the SLO. The idea is to prove that you are teaching and the students are learning. Returning to these three words – Student Learning Objective – can provide clarity when you find yourself “lost in the forest.” The guidepost is the objective. It is the reason we are providing instruction to this group of students. We are creating guiding experiences to develop mastery of _____ skill, or develop _____ ability.

The metric categories some SLO models use are targets like Growth, Mastery, or Growth & Mastery. I prefer to choose mastery because mastery is essential to attaining aesthetically pleasing results in music. In your teaching situation, growth may be preferable. I consult my curriculum and the standards by which my curriculum is structured. Using those resources as a guide, I select learning objectives and figure out what the “end goal” will be. It helps to consider this process as a road trip and the end goal as a final destination. What does the “city” or “end goal” where the final assessment will occur look like?

Step 3: Create the assessment instrument by which you will measure student growth, mastery or both.

I design assessments that will accurately demonstrate that my students have learned and that I have helped them show growth or reach mastery. This can be where confusion sets in. Return to “Student Learning Objective.” Students will master _____. Use this statement: “I can measure student mastery or growth through this data:_____.” Data can be compiled through the use of rubrics that assess active demonstration of skills and abilities or numerical scoring schemes applied to a physical product like a quiz, worksheet, test, etc. Rubrics can also be used to assess and measure growth when students create physical products like compositions, or multi-component projects.

If you choose growth, you’ll have to establish some kind of baseline data which will provide the mechanism for growth to be proven. This can be done in the form of a pre-test. In my experience, it works well to administer the final assessment prior to any instruction on the targeted concept or skill. If the students score highly, you’ll have a harder time proving growth.
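As a rough illustration of how baseline data turns into a growth figure, here is a minimal Python sketch. It assumes the same assessment is scored as a percentage before and after instruction. The student names, the scores, and the `growth_points` helper are all invented for this example and are not part of any official SLO form.

```python
from statistics import mean

# Hypothetical scores (percent correct) on the same assessment, given before
# and after instruction. Names and numbers are invented for illustration.
pre_scores = {"Student A": 40, "Student B": 55, "Student C": 90}
post_scores = {"Student A": 75, "Student B": 80, "Student C": 95}


def growth_points(pre, post):
    """Return each student's gain in percentage points (post minus pre)."""
    return {name: post[name] - pre[name] for name in pre if name in post}


gains = growth_points(pre_scores, post_scores)
average_gain = mean(gains.values())

for name, gain in gains.items():
    print(f"{name}: {pre_scores[name]} -> {post_scores[name]} ({gain:+d} points)")
print(f"Average gain: {average_gain:.1f} points")
```

Student C in this made-up data shows the problem with high pre-test scores: starting at 90 leaves very little room to demonstrate growth, even though the student finishes with the highest score.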

Step 4: Create a timeline for the process.

Once I have created my final assessment, I decide how instruction will align with these parameters, create a plan to support my students in meeting the objective and put a timeline in place. Again, use the road trip analogy. Which stops will we make during the journey to support our successful arrival at the final destination? When is the appropriate time and place along our journey to make those stops? If we need to be prepared for “Final Assessment” Town by April 30th, we should probably visit the cities of 1.____, 2.____, and 3.____, where we will practice and process skills and acquire increased ability and/or knowledge along the way. The plan will take us to 1.______ by November, 2._____ by January, 3._____ by March, etc. Sometimes this approach can be easily related to Backward Design or the ADDIE model of instructional design.

Getting Organized

I like to use this graphic organizer to help sort out my ideas away from and prior to filling out the “official forms.”

I create three SLO assessments for one group of students. I use one for playing instruments, an understanding of chord and level drones. The second assessment demonstrates mastery in decoding rhythms to prove an understanding of iconic music literacy. The third is a movement assessment based on successfully performing a folk dance with correct dance figures, vocabulary and appropriate partner manners. I find that once I have put this informal plan together, I am more successful completing the “official” forms.

I use the organizer above to create my three assessment plans. I refine my ideas throughout the process and stick to “big ideas” as I map out my plan.

The Official Forms

Now that I have sketched out my plan, I can take it to my official form. Although SLO documents vary from state to state and even district to district, they have a lot of similarities. While this might not look exactly like your SLO documentation, it may provide some insight and inspiration when you complete your documents. Here is an example of a Pennsylvania SLO overview document:

The goal statement was one that our music department agreed upon. It is general enough to apply to any SLO assessment plan we might select. Next, I filled in the standards that are guiding instruction.

You may be required to give a rationale for why this assessment plan is important. Here is mine: “Students should exhibit age-appropriate skill development in basic ensemble skills, decoding rhythmic and melodic notation, and effectively respond to music as a member of a community folk dance experience.” This should be general enough to cover the assessments, but specific enough to be meaningful.

Performance Measures or something like these will probably come next. This is a fancy way of saying what the name of the assessment plan is. Here is the example from my SLO.

The Purpose is often outlined next. This is like an extension of the rationale for each Performance Measure (PM). The metric selection is on the right. I choose mastery.

I chose growth and mastery for my first SLO, but regretted it because in order to form a baseline, I had to ask students to perform a task using vocabulary that they had never even heard before. It was awkward for all of us. Imagine asking a group of 3rd graders to perform a level drone in C pentatonic to accompany the melody I play. They just stared at me…

Next, the administration frequency is usually required. Administering the assessments once per year is usually acceptable. You may want to repeat your assessment in each marking period to show growth.

Performance Tasks

It may be easier to shift one’s focus next to the Performance Tasks, or the separate “official forms” for each assessment. If the details in the examples seem overwhelming, go back to your informal graphic organizer to get re-centered as you work on your own SLO. The important thing to keep in mind is that there is a need to outline your specific plans for each Performance Measure. In this example I fill out the specific goals I am expecting my students to achieve and how I will be assessing them in more specific terms. The forms will usually require you to reiterate the essential information from the overview, and then elaborate on each Performance Task.

Once the basic information is re-supplied, this information will probably be required in some form:

You will probably have to provide some form of information about the student contributions. Here is an example:

I don’t record the “pretest” as I once did, but I replace this with an exploration of what the students think it might be like to accompany a song in the manner of an “always the same” using C and G, and “Jumping from long bars to short bars” C and G.

The Rubrics

Your plan for scoring is the next thing that you’ll need to outline. Here is an example of a rubric that I created for chord and level drone assessments.

The Fine Print

Next, a series of explanations regarding process is outlined. These come from the actual forms for Pennsylvania. The italicized words are my responses to the questions the form poses.

1. Administration (TEACHER)

a. Administrative frequency: How many times will the student be given this task within an identified timeframe? Twice; Once in first trimester (pre-test), and once in last trimester (Summative).

b. Unique task adaptations and/or accommodations: How does the task change in either presentation, response options, setting, etc. to accommodate students with disabilities, English language learners, etc.? The task changes minimally for various learners; however, the guidance for performing the task will be adjusted as necessary for each student to arrive at essential task performance capabilities.

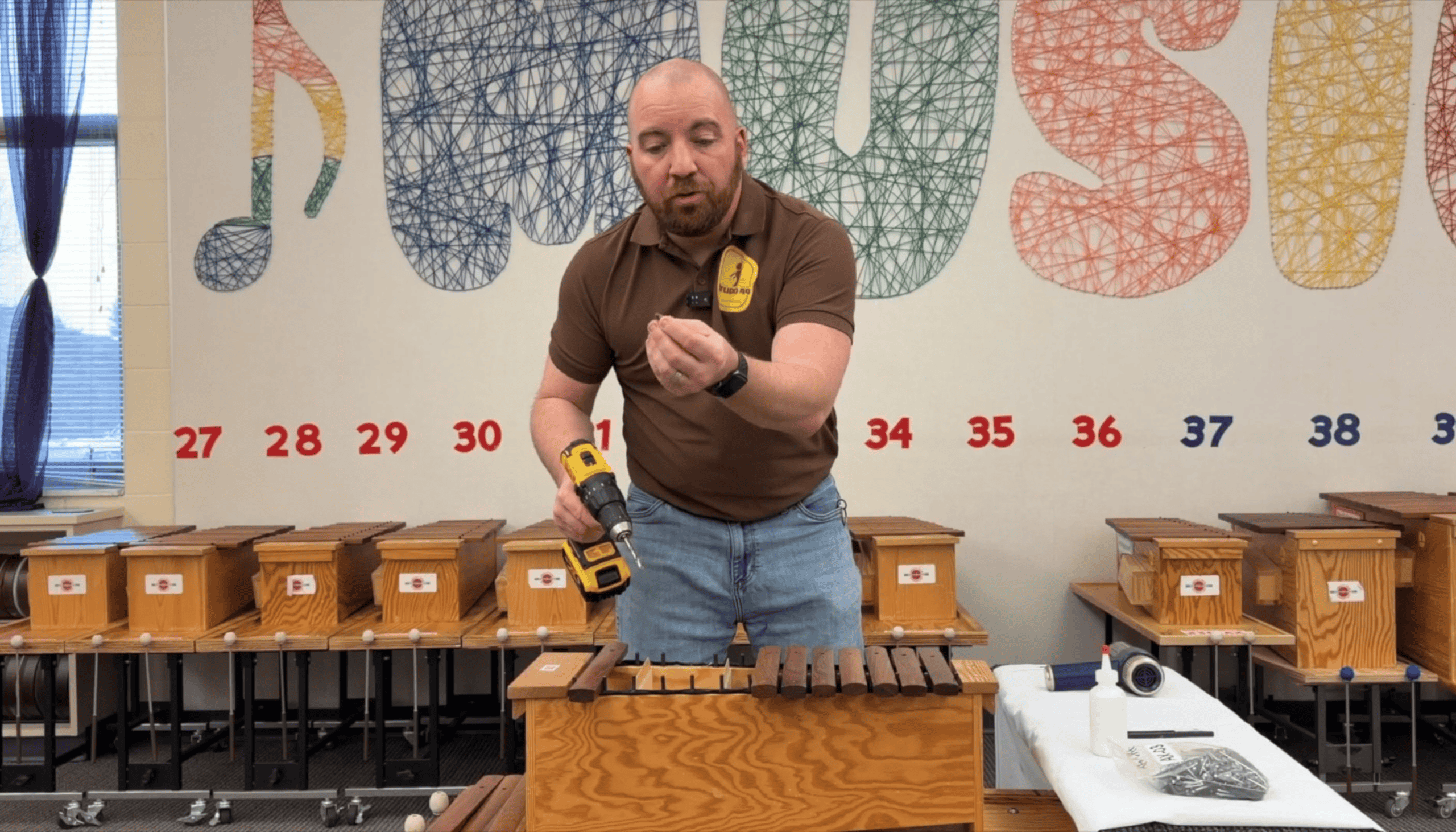

c. Resources and/or equipment: What equipment, tools, text, artwork, etc. is needed by the student to accomplish the task? What additional personnel are needed to administer the task? Equipment as outlined in the Performance Task Framework 1.c. No additional personnel should be needed to administer the task.

2. Task Scenarios, Requirements, Process Steps, Products (STUDENT)

a. Task scenario: What information is provided for the student that provides the context necessary to create a response, project, produce, demonstration? Teacher demonstrations and student-to-student reinforcement interactions will take place in an ongoing manner. Finally, a short performance experience in a small group will occur.

b. Requirements: Given the scenario, how are the task requirements articulated to the student in order to establish key criteria by which performance is evaluated? Task directions will be articulated in plain language and through “watch and copy” demonstrations which the students will then perform themselves without a teacher model to copy. Which requirements are implied, thus requiring deeper understanding of the content being assessed? Which criteria are stated explicitly in order to adhere to the time constraints, product parameters, etc.? The requirements of playing in a cohesive ensemble will be implied, i.e. the students will need to play together in such a way that all are playing the same notes according to one central pulse that unifies the sound of each player.

c. Process steps: What guidance expresses the sequence of events, steps, or phases of the task? Guidance will be expressed initially to the whole class and then as needed to individuals who should need clarity and reinforcement of specific aspects of the task(s). How are extended (multiple days) timelines and demonstrations of progress articulated? Extended timelines will not be articulated as the task is easily captured within a short time-frame.

d. Products: Given the activities within the task, what products, demonstrations, or performances are expected during and/or at the end of the process? Products yielded will be the instrumental accompaniment upon barred instruments in either a chord, or level drone while students (same or others) are singing. What information is provided about the criteria used to judge student calculations, products, demonstrations, performances, etc.? Specific outlines of what an excellent model will encompass are provided to the students, as well as possibilities for improvement when deviations should present themselves.

3. Scoring (TEACHER)

a. Scoring tools: How does the rubric classify different levels of performance, student work, etc.? Advanced, Proficient, Basic, In Progress. How is the overall score attained? Scores are attained through teacher observation and ratings on rubrics. How well are multiple dimensions aligned to the standards? Multiple dimensions are aligned to standards as well as possible. The task encapsulates a very small scope in terms of dimensions, which are the specific target standards addressed and referenced above.

b. Scoring guidelines: How are the steps that are used to evaluate student products, performances, etc., articulated? The scoring teacher will observe students performing the task and assign them ratings based on the rubric above. What guidance is provided to assign scores for incomplete work? For incomplete work, or absent students, no scores will be given and they will be made up in subsequent classes. How are additional scoring personnel identified and trained? No additional scoring personnel will be necessary. Given an overall score or classification/performance level, how are examples, models, or demonstrations provided? Examples and models will be provided through the teaching process by both students and teacher.

c. Score/Performance reporting: How are overall results reported back to the student? Overall results will be shared with the students in reference to the rubric. How are scored results reported for all students? A group score record will be kept in the form of an Excel spreadsheet.
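To show how a group score record could be summarized, here is a minimal Python sketch that tallies the four rubric levels named above. It is only an illustration: the record described in the form is an Excel spreadsheet, so plain Python stands in for it here. The student ratings are invented, and treating Proficient and above as “mastery” is an assumption for this example rather than anything the form states.

```python
from collections import Counter

# The four levels named in the rubric, highest first.
LEVELS = ["Advanced", "Proficient", "Basic", "In Progress"]

# Hypothetical ratings from teacher observation; invented for illustration.
ratings = {
    "Student A": "Advanced",
    "Student B": "Proficient",
    "Student C": "Proficient",
    "Student D": "Basic",
    "Student E": "In Progress",
}


def summarize(ratings, mastery_levels=("Advanced", "Proficient")):
    """Count students at each level and compute the percent at mastery.

    Treating Proficient and above as mastery is an assumption.
    """
    counts = Counter(ratings.values())
    table = {level: counts.get(level, 0) for level in LEVELS}
    at_mastery = sum(table[level] for level in mastery_levels)
    return table, 100 * at_mastery / len(ratings)


table, mastery_rate = summarize(ratings)
for level, count in table.items():
    print(f"{level}: {count}")
print(f"At mastery: {mastery_rate:.0f}%")
```

A summary like this can be compared against whatever target your own Performance Indicator sets. The `mastery_levels` argument can be changed if your district defines mastery differently.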

These responses are an example from one SLO that works for me. You will modify your responses to “fit the mold” in your school with your students.

Next, we’ll have to return to the Overview and insert the Performance Indicators and Targets. Basically, just fill in the blanks with the information from the Performance Task Framework.

Performance Indicator Targets

The PI Targets are the direct correlation between the goal statement and the assessment instrument. This is the essential way that your assessments will relate to your SLO Goal Statement. Here are some examples.

Once you’ve filled out this overview, you are ready to submit both the Overview and the Performance Task outlines and follow the plan you’ve laid out. The important thing to remember is to keep the experience of the student at the forefront of your mind. If the students know they are taking a “test,” you probably haven’t been artful enough in the design and integration of the SLO process into your classes.

What is an LDR?

In some districts, a Locally Designed Rubric (LDR) Assessment is a requirement as well. It differs from an SLO only in that it covers a broader “survey group.” In the SLO, we are supposed to select one class section in one grade level. In the LDR, the teacher is required to use an altogether different grade level or course than their SLO group, and it should include all the classes in that grade level. So, where an SLO might cover, say, 25 students, the LDR could cover 60-100 students. All other parameters remain the same as the SLO model. Essentially, this is a broader application of the SLO, but with only one assessment instead of three.

Big Takeaways:

- Choose from assessments you are already using if possible. Don’t reinvent the wheel.

- If you aren’t doing a lot of formal assessments, start simply, reflect, and refine.

- Be kind to yourself through this process! If you are trying your best, you will find positive outcomes and you will improve!

- Create a manageable assessment plan and timeframe.

- Collaborate with a colleague or several colleagues if possible. Divide and conquer!

- LDR Assessments are similar to SLOs, except that they use only one assessment rather than something like three, and they are spread out across all the similar sections a teacher instructs.

- Once you find a successful approach to your SLO and LDR, you can reuse it in future years.

While there are certainly more fun ways to spend our time, I do believe this can be a worthwhile way for music educators to show our value and worth through the same lens as our classroom teacher colleagues. Is it the best language for us to use in communicating our value? Probably not. The bottom line is that SLOs are part of our professional world, and we want to make them as manageable and meaningful as possible.

I sincerely hope this post has helped to “Make Your SLO Process Work.” Please comment below to ask questions and share SLOs and tips that have been successful for you.

7 Responses

Thanks for taking the time to explain the process you used. I’m in the process of creating my SLO for this school year, and it can be challenging trying to figure out how to make what we do as music teachers make sense on this restrictive format.

Thank you, Rod. I know it can be so overwhelming when you are sorting this all out alone! I’m glad this post was helpful!

I agree with Rod. Thank you for your calm, methodical explanation of an onerous process. The example is helpful, as is your advice to take an assessment we are already using, in order to make this task less intimidating.

Like the rubrics & assessment ideas.

Wondering about world music coverage. Just finalizing a book called “Come Calypso.”

Drue,

Thank you so much for this very detailed guide! Highly appreciated!

Hi Drue,

Compared to what I have to do for an SLO in Wisconsin, your plan seems much broader than something I would need to do.

I quote: “I create three SLO assessments for one group of students. I use one for playing instruments, an understanding of chord and level drones. The second assessment demonstrates mastery in decoding rhythms to prove an understanding of iconic music literacy. The third is a movement assessment based on successfully performing a folk dance with correct dance figures, vocabulary and appropriate partner manners. I find that once I have put this informal plan together, I am more successful completing the “official” forms.”

We must state our SLO using SMART Criteria:

Is each of these criteria included in your SLO goal statement?

Specific

Measurable

Attainable

Results-based

Time-bound

An example of mine from last school year is:

“75% of Ms. Emmons’ third grade class who scored at 50% or lower on the Quaver pre-assessment test taken in September 2017 will increase their scores by 30% or more on the post-assessment test taken in May of the same school year.”

I don’t see any specific SLO statement like that in your examples. Are the expectations just different in your state? I find it difficult to lock in to showing numerical, percentage-wise growth in our teaching area. Thoughts?

Jim Frisque

My apologies for this delayed response! I do not have to use SMART criteria. And your assessment sounds like an instrument for measuring growth which wasn’t my focus. You could easily shift the assessment to growth in the way you outlined. So yes, growth happens in our state SLOs but I don’t usually focus the assessment there.